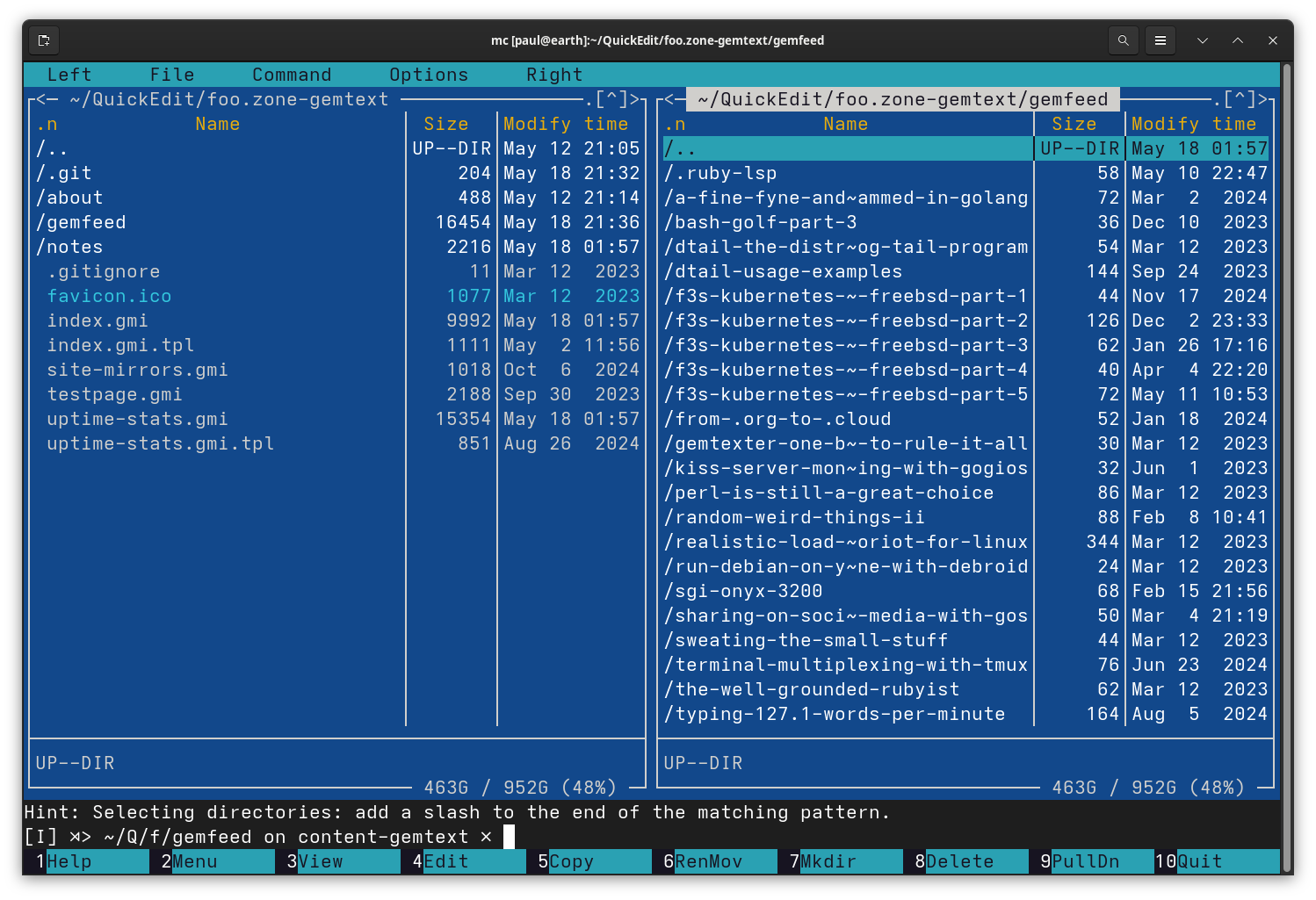

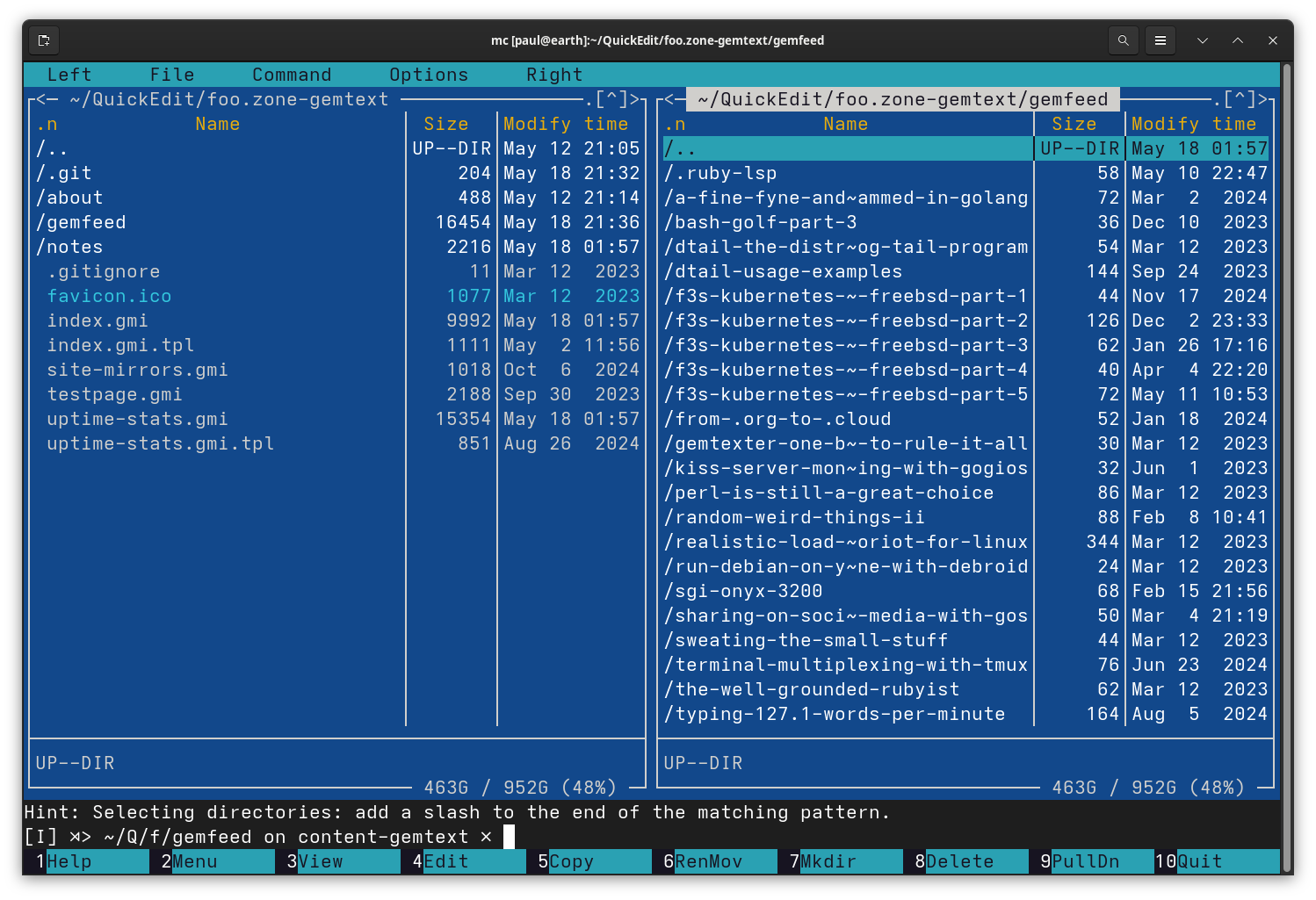

I started using computers as a kid on MS-DOS and mainly used Norton Commander to navigate the file system in order to start games. Later, I became more interested in computing in general and switched to Linux, but there was no NC. However, there was GNU Midnight Commander, which I still use regularly to this day. It's absolutely worth checking out, even in the modern day. https://en.wikipedia.org/wiki/Midnight_Commander #tools #opensource

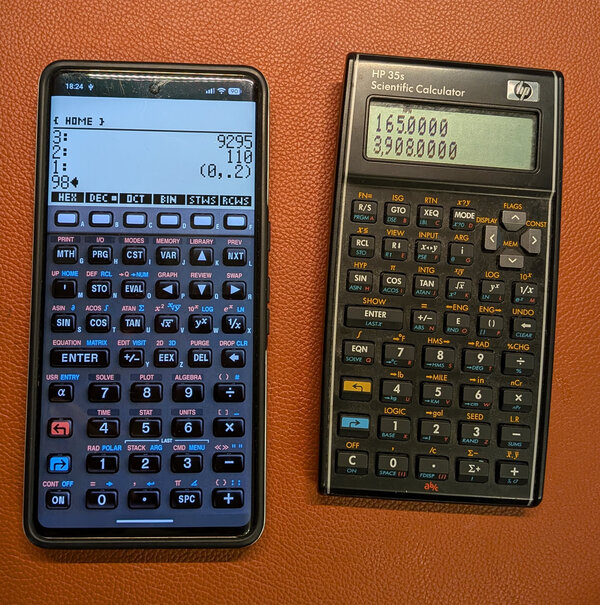

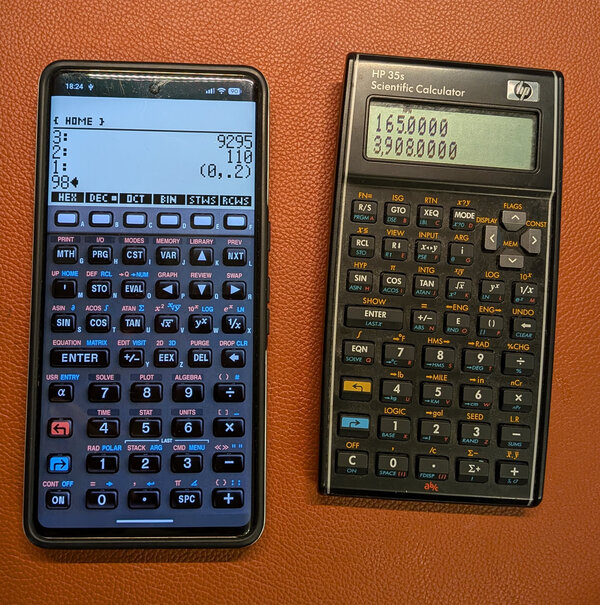

I love these calculators. On the left, the free 48sx app running on Android. On the right, the HP 35s.

Currently building a comic book generator with AI 🤖📚

This (opinionated) microblog's engine can be found at https://codeberg.org/snonux/snonux

My Supernote Nomad just became 2x more useful after the latest firmware upgrade: Sleep screen can now show the last opened page! - Such a small thing can much such a big difference!

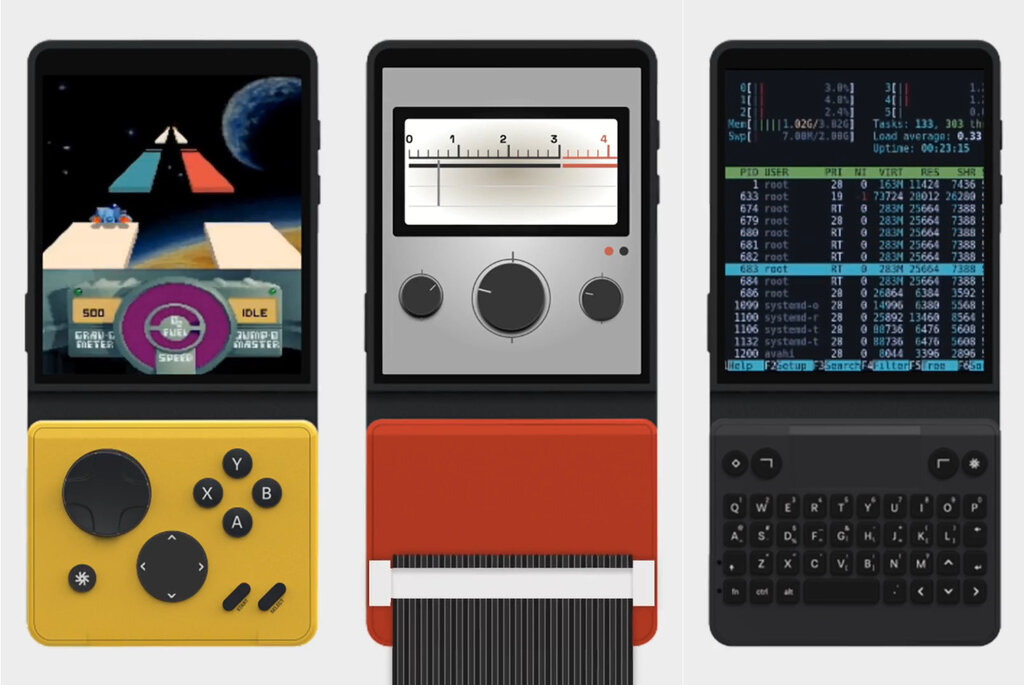

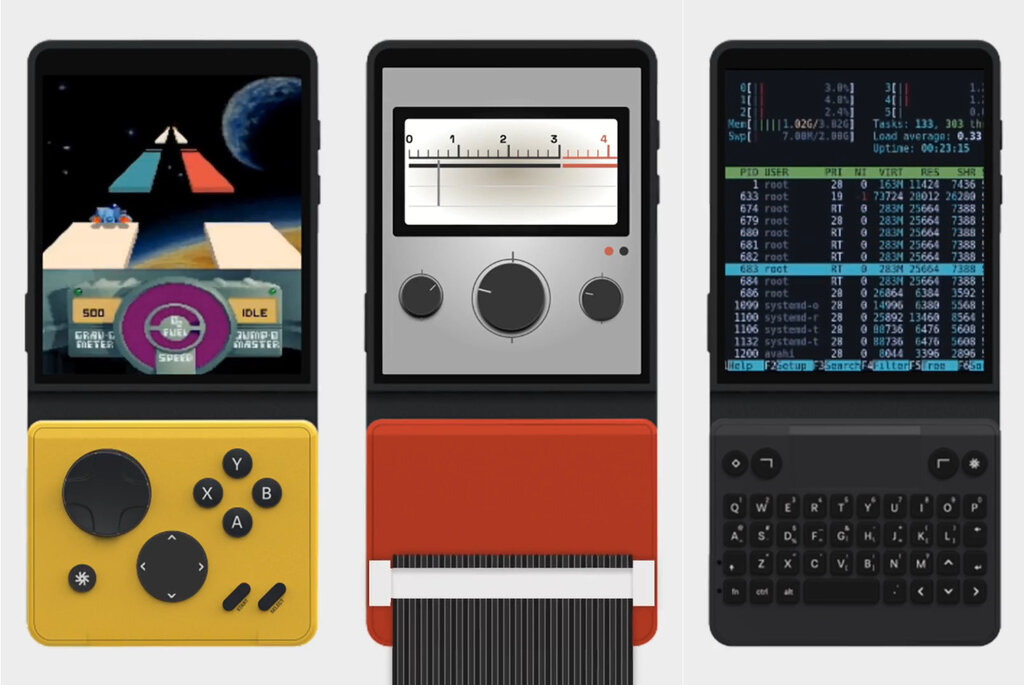

Just backed the Mecha Comet on Kickstarter — a modular Linux handheld computer. Delivery is expected around September 2026. Already have a few small use cases lined up for it. Excited to see how the modular design holds up in practice.

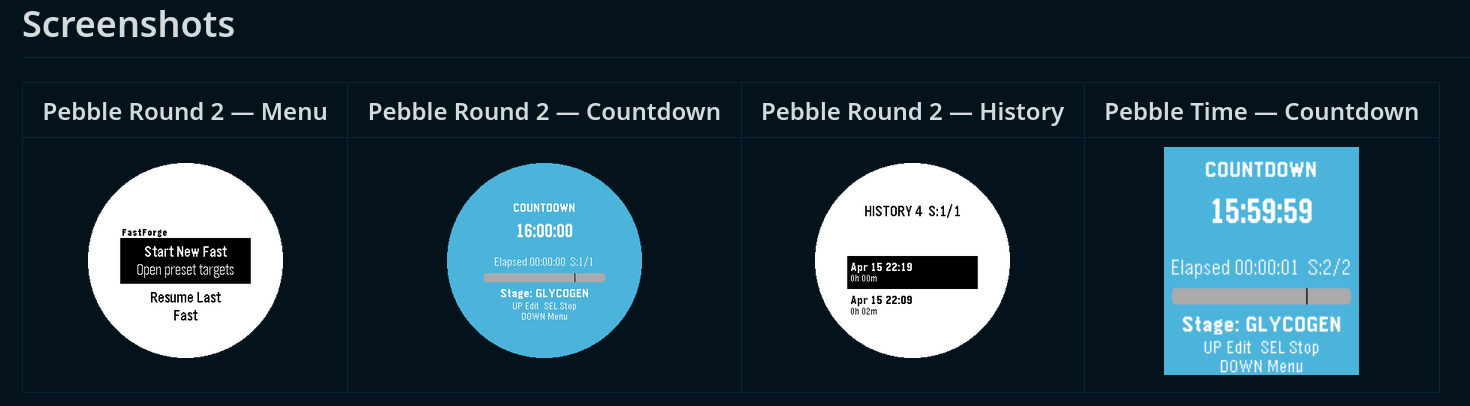

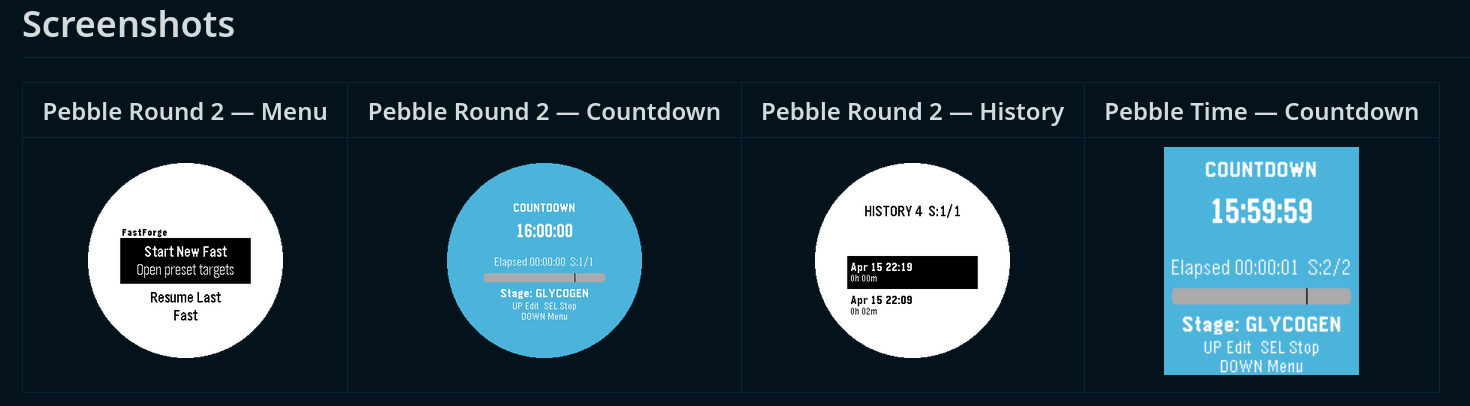

For the Pebble app contest, I built my very first PebbleOS app. I have put it here: apps.repebble.com 🙂 Track intermittent fasting from your wrist!

I don't even own a Pebble watch anymore (I used to, many years ago), but I've preordered the Pebble Round 2, and it should arrive shortly! I don't expect to win anyhing, but this was a good reason to actually build this app now I want to use once my watch arrives!

#pebble #pebbleos #smartwatch #app

I've been playing around with LLMs runnable on local hardware lately to check whether if it is worth to invest 4k in it. So far, the results have been promising, but I'm still expecting a bit better performance and utility for my specific use cases.

- I'd like to have at least 256GB of VRAM (or unified memory) for inference (not like most home-solutions which max out at 128GB)

- I'd like to have at least the same speed as Nvidia's A100

- I'd prefer a Linux based OS, but a Mac would not be a disaster here. Mac Ultras are way too expensive still (especially due to the memory prices).

I don't think there is anything below 5 grand available for now. So for now I'll keep renting my GPUs from Hyperstack for further experimentation and will revisit bying local LLM hardware in a year from now or so!

#llm #inference #hardware #local

This is my first entry here. This microblog entirely runs in my home-LAN on a Raspberry Pi 3 computer.